Scaling Domain Expertise: What That Actually Looks Like

For B2B consultancies, agencies, and technology platforms with complex and strategic offerings, growth is ultimately about connecting your expertise to a prospect’s situation.

The principles behind that connection are deeply specific to your domain:

How do you frame a proposal around the outcomes that matter to a specific client?

Which offering do you lead with, depending on what a particular prospect is going through?

Which accounts would genuinely benefit from the positioning and unique selling proposition that your competitors don’t have?

All the companies I work with already have a ton of this knowledge.

The problem is it lives with a few experienced people and doesn’t scale.

It works on select accounts, but it means growth is tied to their capacity and doesn’t survive turnover. Every account that gets their attention benefits from that depth. Every account that doesn’t gets generic treatment, or doesn’t get reached at all.

That “depth on a few accounts vs generic treatment” trade-off has held because the processes available couldn’t scale what’s specific to your company.

Playbooks still rely on people who understand how to execute them.

Off-the-shelf AI tools can personalise based on LinkedIn posts, but determining why one of your offerings matters for a specific prospect’s situation and building a bespoke narrative around it requires something different.

What’s changed is that you can now capture how your people think (their judgment, their pattern recognition, the reasoning behind their decisions) in datasets and task-specific context detailed enough for AI systems to apply that logic across your full market.

Over the last two years I've been focusing on building systems that capture and scale the expertise that makes companies unique — for my own consultancy and with clients.

What follows in this post is what I’ve learned about what this process actually looks like, where most companies should start, and what it takes to get to a system that delivers the same depth as your best people would bring if they had the time.

NB: This post isn’t about AI replacing your ideation or creativity, and I won’t try to teach you about your domain. It’s about scaling what you already know and giving the same depth to accounts you can’t currently reach.

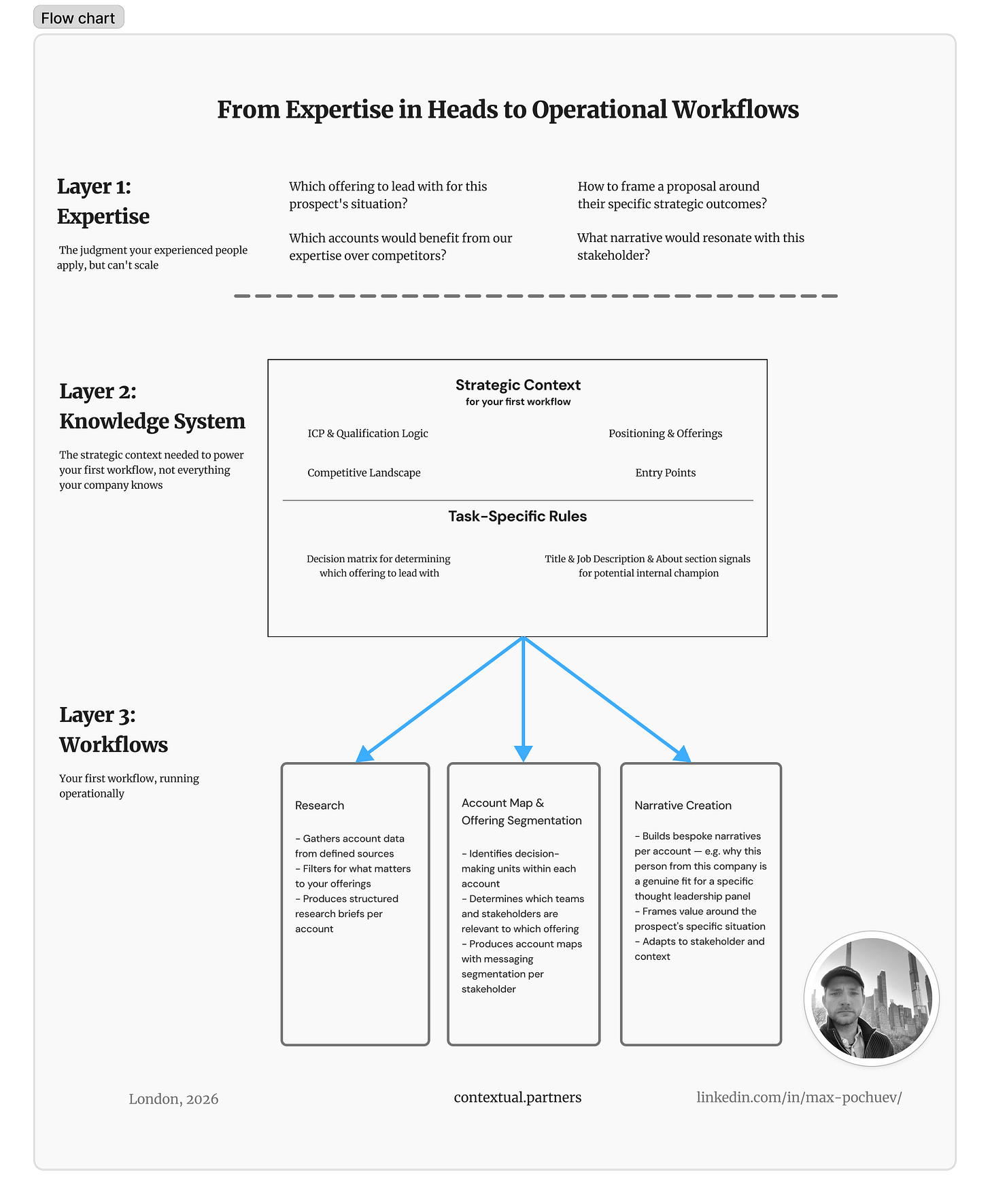

The process of making domain expertise operational has three layers.

The first is the knowledge your people have about your market.

The second is capturing that knowledge in structured databases: your strategy, plus task-specific rules and context that are specific enough to be actionable.

The third is the workflows that apply those rules to produce actual outputs — account research, qualification, narrative — across your full market.

Most of the work — and most of what determines whether this succeeds or fails — sits in the second layer. The workflows are the visible output, but they’re only as good as the knowledge system underneath them.

Strategy Alignment and Capturing Your Knowledge

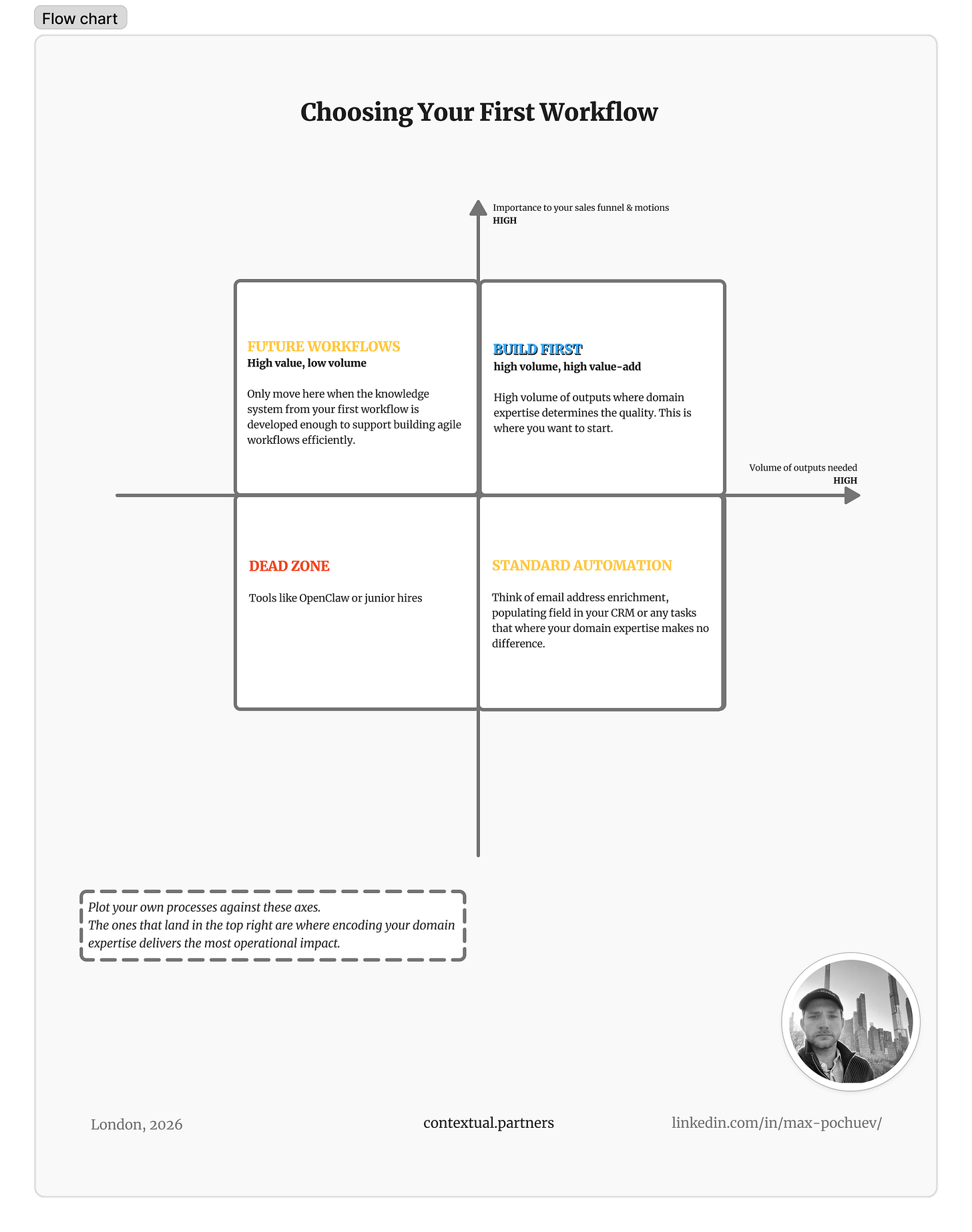

The first step is always about identifying which process to build first.

The right first workflow is the process that sits at the intersection of volume, added value, and required domain expertise — where you need a high volume of outputs and where domain judgment is most important to your sales funnel and GTM motion.

For example, if your offerings can target different teams within an organisation, your people might spend hours reading through LinkedIn profiles, drawing account maps with proper decision-making units, and working out which messaging applies to which stakeholder. That’s a high-scale, high-value-add process.

Or if the starting point of your sales funnel is thought leadership events and your team crafts bespoke narratives about why this specific person from this specific company is a great fit for a particular panel — so the invitation doesn’t read like you’re contacting everyone — that’s another one.
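One way to make that intersection concrete is to score candidate processes on the three dimensions. The sketch below is only an illustration: the 1-5 scales, the choice to multiply the scores, the `CandidateWorkflow` name, and the example scores are all assumptions, not a fixed method.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CandidateWorkflow:
    name: str
    volume: int            # how many outputs are needed (1 = few, 5 = many)
    added_value: int       # impact on the sales funnel (1 = low, 5 = high)
    domain_expertise: int  # how much expert judgment it needs (1 = low, 5 = high)

    def priority(self) -> int:
        # Multiplying rewards processes that are high on all three
        # dimensions; a low score on any one pulls the total down sharply.
        return self.volume * self.added_value * self.domain_expertise


def rank(candidates: list[CandidateWorkflow]) -> list[CandidateWorkflow]:
    """Return candidates ordered from highest to lowest priority."""
    return sorted(candidates, key=lambda c: c.priority(), reverse=True)


# Hypothetical scores, as a team might assign them in a workshop.
candidates = [
    CandidateWorkflow("Contact data enrichment", 5, 2, 1),
    CandidateWorkflow("Event invitation narratives", 4, 4, 4),
    CandidateWorkflow("Account mapping and stakeholder messaging", 5, 4, 4),
]

for c in rank(candidates):
    print(f"{c.priority():>3}  {c.name}")
```

The scores themselves matter less than the conversation needed to agree on them. A process that scores high on volume but low on domain expertise, like the enrichment example here, is usually a plain automation task rather than a good first workflow for this approach.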

Selecting the right first workflow — and the right sequence after it — is one of the most important factors in whether this succeeds.

The procedure and the actual questions for capturing strategic datasets and task-specific processes depend heavily on the process you’re building, but the one framework we always apply at Contextual is reverse-engineering reasoning patterns, either from completing tasks manually with your team or from analysing historical data.

In practical terms, if your first workflow is building a compelling case for why a company should attend your thought leadership event, sit down with your team and research 5-10 great-fit companies together. Work through what makes each one a “great fit” and how you would convey that fit in your outreach.

What you want to achieve is a step-by-step overview of the process where for each stage you have a breakdown of signals, their weight, and their relations to others.

What you need to capture isn’t a linear flowchart — it’s the implications behind each signal and their relationship to others.
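To show the difference between a flowchart and a set of related signals, here is a minimal sketch of how one stage could be written down. The `Signal` structure, the multiplier approach, and the two example signals are hypothetical; a real capture would use whatever your team actually reasons about.

```python
from dataclasses import dataclass, field


@dataclass
class Signal:
    name: str
    weight: float      # how much this signal matters on its own
    implication: str   # what an experienced person reads into it
    # Related signals that change this one's meaning when also present,
    # mapped to a multiplier on this signal's weight (values are hypothetical).
    modified_by: dict[str, float] = field(default_factory=dict)


def fit_score(signals: dict[str, Signal], observed: set[str]) -> float:
    """Sum the weights of observed signals, adjusted by related signals.

    Every name in `observed` must be a key of `signals`.
    """
    total = 0.0
    for name in observed:
        signal = signals[name]
        weight = signal.weight
        for other, multiplier in signal.modified_by.items():
            if other in observed:
                weight *= multiplier
        total += weight
    return total


signals = {
    "new_leadership": Signal(
        "new_leadership", 2.0, "priorities are likely under review",
        modified_by={"recent_rebrand": 1.5},
    ),
    "recent_rebrand": Signal(
        "recent_rebrand", 1.0, "positioning is already in motion",
    ),
}

alone = fit_score(signals, {"new_leadership"})
together = fit_score(signals, {"new_leadership", "recent_rebrand"})
print(alone, together)
```

The point of `modified_by` is that the same signal can mean more, or less, depending on what else is true about the account. That relationship is exactly what a linear checklist loses.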

One thing that consistently surprises our clients is how valuable this process is on its own — purely from an alignment perspective. We often see that people across the organisation think about the same things from different lenses, and one of the few ways to spot where you have drift at the strategy level is by breaking down processes at the operational level — where the strategy actually shows up in practice.

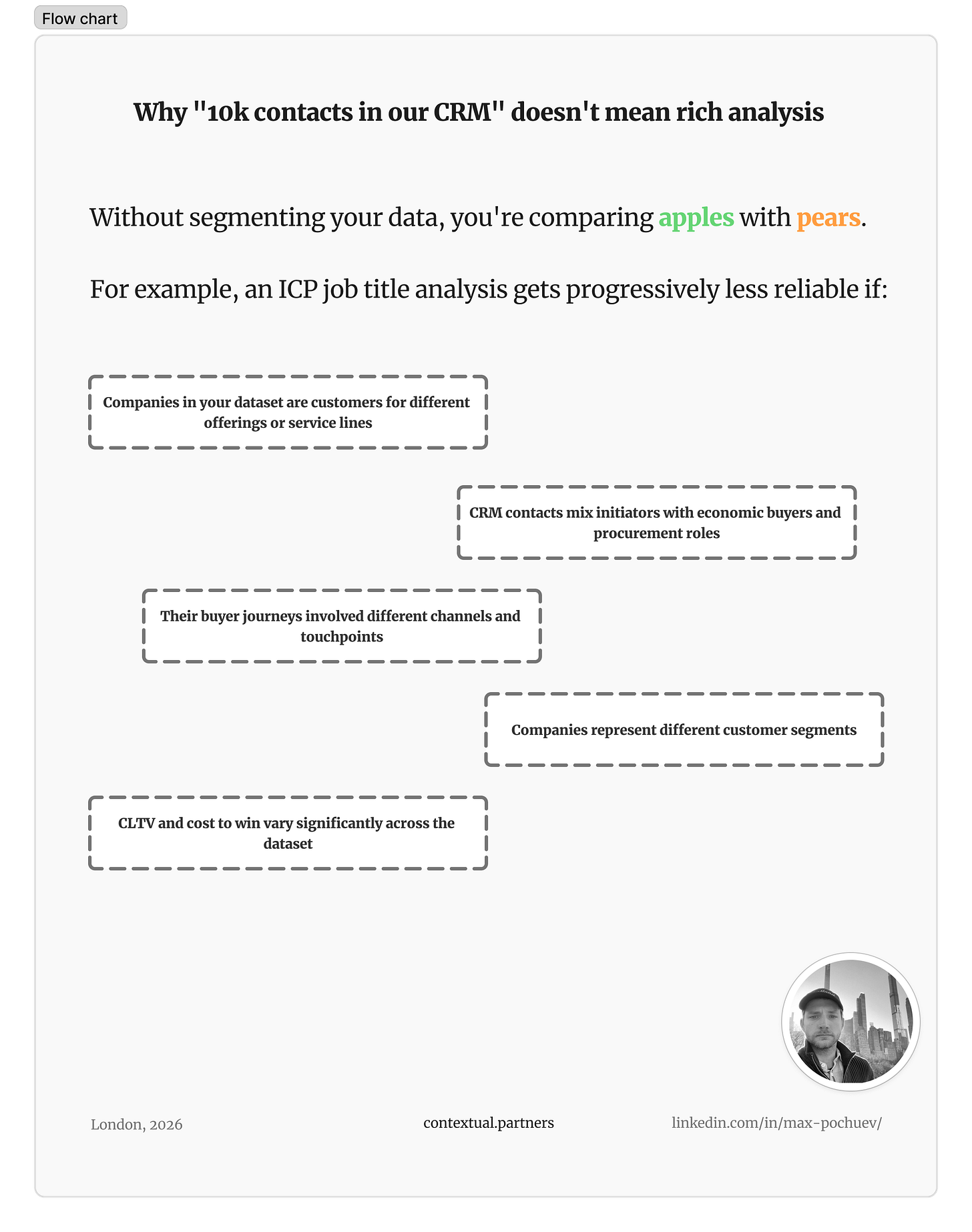

The second source of reasoning patterns is your historical data — CRM records, past proposals, won and lost accounts. But data is only as useful as the segmentation behind it.

A common example I hear from clients: “Let’s do a download of our CRM data to understand what job titles we should be focusing on in our outreach. We have 10k actual client contacts in the CRM, so the data should allow for rich analysis.” This is the kind of oversimplification that may seem more efficient since the data already exists, but it actually risks wasting months before you arrive at the right definition.

The problem is that unsegmented data compares apples with pears, and each axis that isn’t aligned makes the comparison progressively worse.

When your data is segmented around each axis that matters to your process, it’s gold for analysis — without that segmentation, it produces more noise than insight.
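A small sketch in plain Python shows how this plays out. The rows, segment names, and job titles are invented for illustration and are not client data, and the `win_rates` helper is hypothetical.

```python
from collections import defaultdict

# Hypothetical CRM rows: (segment, job_title, won). Invented for illustration.
records = [
    ("enterprise", "Head of Marketing", True),
    ("enterprise", "Head of Marketing", True),
    ("enterprise", "Head of Sales", False),
    ("enterprise", "Head of Sales", False),
    ("mid_market", "Head of Sales", True),
    ("mid_market", "Head of Sales", True),
    ("mid_market", "Head of Marketing", False),
    ("mid_market", "Head of Marketing", False),
]


def win_rates(rows, key):
    """Return {group: share of won deals}, grouping rows with `key`."""
    won = defaultdict(int)
    total = defaultdict(int)
    for row in rows:
        group = key(row)
        total[group] += 1
        won[group] += row[2]  # True counts as 1, False as 0
    return {group: won[group] / total[group] for group in total}


# Unsegmented: both titles come out at the same win rate, so the data
# appears to say nothing about which title to focus on.
by_title = win_rates(records, key=lambda r: r[1])

# Segmented: the same rows show opposite patterns in each segment.
by_segment_and_title = win_rates(records, key=lambda r: (r[0], r[1]))

print(by_title)
print(by_segment_and_title)
```

In this made-up dataset, the pooled view hides the fact that the best title to approach flips between segments. Real data is rarely this clean, but the mechanism is the same.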

I often think about this in the same way as incrementality tests in digital marketing — but no incrementality test works without volume of experimentation, and in high-value B2B relationships you rarely have that volume available for testing. You need to augment it with individual contextual analysis of your historical data.

A rich deep dive into 10 account relationships — with a detailed trail of “what were the causes of this” for each attribute of that relationship — is far more valuable than an unsegmented comparison of 1,000 accounts.

If you need help determining the right place to start — get in touch.

Operational Outputs

Aligning on the company strategy and capturing how you approach each step is the critical foundation — but if that data ends up in a document that someone still needs to read, interpret, and apply per account, it’s just a more detailed playbook.

The shift is when that structured knowledge stops being a reference and starts powering workflows that produce operational outputs.

The easiest way to think about workflows is as a set of automations, data pipelines, and agent-optimised rule files that enable production of actual outputs.

There are a number of automation, data-orchestration, and agentic tools out there, and when we work with our clients on selecting the right infrastructure, we always start with the existing process. Some questions you can ask your team:

What’s the existing tech stack for this process — especially, where does the data that enables it live?

What parts of the process carry a lot of subjective rationale or are hard to quantify without extensive experiments — and require an additional QA layer or even human in the loop?

What’s the desired output format and end-user experience? End-to-end research and narrative generation is a fundamentally different build from enriching contact data and uploading it to your CRM for your team to use in building touchpoints.

What does the roadmap look like beyond this first workflow?

By the time you ask whether to use Clay, n8n, or Claude Code (or any other tool), you’ll have a much better understanding of what your current process looks like — what data sources and stages are involved — which will give you a better idea of the decision-making criteria for your case. A complete set of recommendations on when to select which tool is outside the scope of this post (you’ll find a ton of information online). What I’m trying to give you is frameworks and examples to help you navigate the process and streamline your approach.

To give you a sense of what this looks like in practice — one of my own workflows assesses company propositions and determines if and where domain expertise is structurally required for proactive motions. This is one workflow (one stage of the process), not the full pipeline.

Building it took rounds of distilling how I think about my market, sharpening the rules and creating task-specific context, and testing outputs against my own judgment. The goal was to reach a point where the workflow consistently produces the same strategic analysis I would produce if I sat down and did it manually for each company.
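Testing outputs against your own judgment can be as simple as comparing labels on a sample of companies. The sketch below assumes each assessment is reduced to a single label per company; the function names, the label values, and the company identifiers are hypothetical.

```python
def agreement_rate(expert: dict[str, str], workflow: dict[str, str]) -> float:
    """Share of companies where the workflow's label matches the expert's."""
    shared = expert.keys() & workflow.keys()
    if not shared:
        raise ValueError("no companies were assessed by both")
    matches = sum(expert[c] == workflow[c] for c in shared)
    return matches / len(shared)


def disagreements(expert: dict[str, str], workflow: dict[str, str]) -> list[str]:
    """Companies to review in the next round of sharpening the rules."""
    shared = expert.keys() & workflow.keys()
    return sorted(c for c in shared if expert[c] != workflow[c])


# Hypothetical labels for whether domain expertise is structurally required.
expert = {
    "company_a": "required",
    "company_b": "required",
    "company_c": "not_required",
    "company_d": "not_required",
}
workflow = {
    "company_a": "required",
    "company_b": "not_required",
    "company_c": "not_required",
    "company_d": "not_required",
}

print(agreement_rate(expert, workflow))
print(disagreements(expert, workflow))
```

The list of disagreements is the more useful output. Each one points to a rule or piece of context that the workflow is missing, which is what the next round of distilling should focus on.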

Here’s what that means in practice. Three companies in a similar space — creative and experience agencies — go through the same workflow.

One is an experience marketing agency with a single integrated service across 12+ unrelated sectors. The workflow determines that, in their case, domain expertise is required to articulate why their expertise matters for each prospect’s situation, because every company thinks about this offering in its own way.

The case studies support this: a logistics company activating a sports sponsorship had different entry pain points from a tech company transforming its annual conference, even though both needed the same core capability.

Another is a purpose-driven agency with five distinct service areas, from brand building to digital transformation, serving charities and commercial brands. Here the workflow identifies a different bottleneck: which of those five services to lead with depends entirely on what each prospect is going through. The domain expertise required to make that call is the capacity constraint.

A third is a full-service creative agency with a generalist offering. The workflow concludes that domain expertise isn’t structurally required for their growth. Any brand with advertising needs is their potential client, the pitch works uniformly, and the friction in their growth mainly sits in market awareness. That’s not a negative conclusion, it’s a useful one. Around 60% of the prospects I assess land here.

Here’s a short video walking through the full analysis from this stage and the output the workflow produces:

The point is that each answer reflects the same reasoning I would apply — which signals matter, what they suggest about this company’s situation, where the bottleneck actually sits, and what that means for how I’d approach them. This analysis isn’t based on firmographic data. It’s deeply specific to my offerings, my market positioning, and the criteria I’ve developed through years of working in this space.

And because the reasoning is captured, what flows downstream is strategic context that shapes everything after it.

This only becomes possible when you’ve done the work in the previous section: distilling how you think about a specific task, mapping the signals and their weights, and building task-specific rules detailed enough that the workflow can make the same judgment calls your best people would. Without that foundation, you get inconsistent or unusable outputs. With it, you get analysis that your best people would recognise as their own thinking.

What Changes When This Is Working

When your market knowledge and domain expertise are encoded into workflows, every account in your addressable market gets the depth of analysis and strategic framing that today only happens for the accounts your best people have time for. That includes the accounts that currently get generic treatment or don’t get reached at all.

And because the reasoning is captured, not just the outputs, what your team learns feeds back into the system. A new insight about what signals matter, a shift in positioning, a new offering — these update the knowledge system rather than living in someone’s head until they remember to apply them.

But approach this with caution — none of the stages I’ve shared with you today is a weekend project, nor is it set-and-forget.

Building the foundational datasets and task-specific rules requires genuine strategic alignment, so that you can capture decisions and reasoning precisely enough for workflows to replicate them. The work of capturing knowledge alone surfaces questions about your positioning and your market that many teams haven’t had to articulate precisely before.

What I’ve covered in this post is the process for a single workflow. What moves this further is when your strategic datasets and workflows become connected — so that an insight at one stage propagates across every related stage. That’s where the knowledge system starts functioning as a living reflection of how your company thinks. That’s what I’ll cover next.

If your team’s domain expertise stays locked in experienced heads, you’re choosing between depth that doesn’t scale and volume that doesn’t reflect what makes you effective.

Our work focuses on capturing domain expertise and building the systems that scale it. If you have clarity on your offerings and want to explore what a first workflow could look like for your specific situation — get in touch.